The Lobster That Broke the Internet And Maybe Security

OpenClaw exploded to 80K stars in a week and exposed the promise and risk of autonomous AI agents. Real CVEs, real exploits, and what defense actually looks like when your AI assistant can do things.

AI agents that actually do things are here. And the fight isn't about who has the smartest model anymore. It's about who controls the layer where AI actually gets work done.

Last week, a lobster-themed AI project caused a buying panic for Mac Minis.

In seven days, OpenClaw went from zero to 80,000+ GitHub stars. It spiked Google Trends. It reportedly moved Cloudflare's stock price.

And it exposed why autonomous AI agents are both the future we've been waiting for and a security nightmare nobody's prepared to handle.

KB

What is OpenClaw?

An AI agent that runs on your computer but connects to your real digital life: email, WhatsApp, calendar, files.

It uses large language models (the AI behind ChatGPT and Claude) to execute complex tasks on its own.

Remember when Siri and Alexa were supposed to be personal assistants? And both were... useless? OpenClaw is what we thought we were getting 10 years ago.

You give it a goal. It breaks it into steps. It uses your actual accounts to execute. It adapts when things fail. You get a notification when it's done.

The story that made it go viral: A developer wanted a restaurant reservation. OpenTable didn't have availability. OpenClaw found the restaurant's phone number, downloaded a voice AI tool, called the restaurant, booked the reservation, and added it to the calendar.

Zero human intervention.

For the first time, AI doesn't feel like a chatbot. It feels like an assistant.

And that shift reveals something important. The next wave of AI breakthroughs won't come from making models smarter.

The models are already smarter than most knowledge workers at most tasks. The breakthroughs will come from giving them tools to operate on their own.

OpenClaw is a preview of that future.

Within days, people were building extensions on top of it. Agents that write code while you sleep. Agents that monitor flight prices and rebook automatically. Not demos. Real implementations, running right now.

Then everything went sideways

The project originally launched as "ClawBot." Anthropic's lawyers weren't fans.

During the ten-second rename, crypto scammers hijacked the old accounts and launched a fake "$CLAUDE" token. Thousands got rugged.

The project rebranded to OpenClaw (the mascot is a lobster "molting" its old shell).

But the real problems were deeper.

The security nightmare

Security researchers started poking around. What they found was not great.

Any AI agent that's useful has three properties: access to your private data, the ability to take actions, and exposure to untrusted input. That combination is what makes agents powerful. It's also what makes them exploitable.

The core problem has a name: prompt injection. Someone hides instructions inside a document or email that the AI reads and obeys without questioning.

The AI can't tell the difference between your commands and an attacker's. There's no virus. No malware. Just text.

Researchers demonstrated this live with OpenClaw.

This isn't theoretical. It's documented, assigned CVE numbers, and happening right now:

Microsoft 365 Copilot (CVE-2025-32711): A specially crafted email triggered Copilot to exfiltrate private data without the user clicking anything. The email lands in your inbox. Copilot reads it during normal summarization. Hidden instructions in the HTML tell Copilot to grab sensitive files and send them to the attacker. Zero clicks. Zero warnings.

Claude for Excel ("CellShock"): An attack hid prompt injection inside a public dataset. When an analyst imported it and asked Claude to summarize, the AI inserted a formula that silently sent spreadsheet data to an attacker's server disguised as an image request.

GitHub Copilot (CVE-2025-53773, severity 9.6/10): A malicious README file in a public repository. You clone the repo. Your coding assistant reads the README. The README contains hidden instructions. Your assistant follows them. Remote code execution through a text file.

Slack AI: An attacker posted a hidden instruction in a public channel. When a victim asked Slack AI for their API key, the AI pulled both the private message containing the key and the attacker's public instruction into the same context, then showed a fake "reauthenticate" link that sent their secret to the attacker.

In December 2025, a security researcher found over 30 vulnerabilities across 10+ AI coding tools. Every single tool tested was vulnerable.

This isn't one project's problem. It's the entire category.

This isn't a bug that gets patched. It's an unsolved problem in AI. Nobody has a fix yet. Not the companies building these models, not the researchers studying them.

In January 2026, NIST issued a formal request for information specifically about AI agent security. The EU AI Act begins enforcing requirements for high-risk AI systems in August 2026, with penalties up to 35 million euros. Regulators see it. The industry is still catching up.

Big Tech assistants avoid this by being useless. They don't have enough permissions to cause damage. OpenClaw is useful because it has those permissions.

That tradeoff is the whole story.

The plugin ecosystem is wide open. No moderation, no review process. And hundreds of exposed instances were found running on the public internet with no login required, leaking chat histories, email credentials, everything.

Every input your agent reads is an attack surface: email bodies, calendar invites, shared documents, Slack messages, code repositories, API responses, even MCP tool descriptions.

You can't scan text for malicious intent the way you scan files for malware. The same sentence that says "forward this email" is either a legitimate request from you or a hidden attack.

Your AI can't tell the difference.

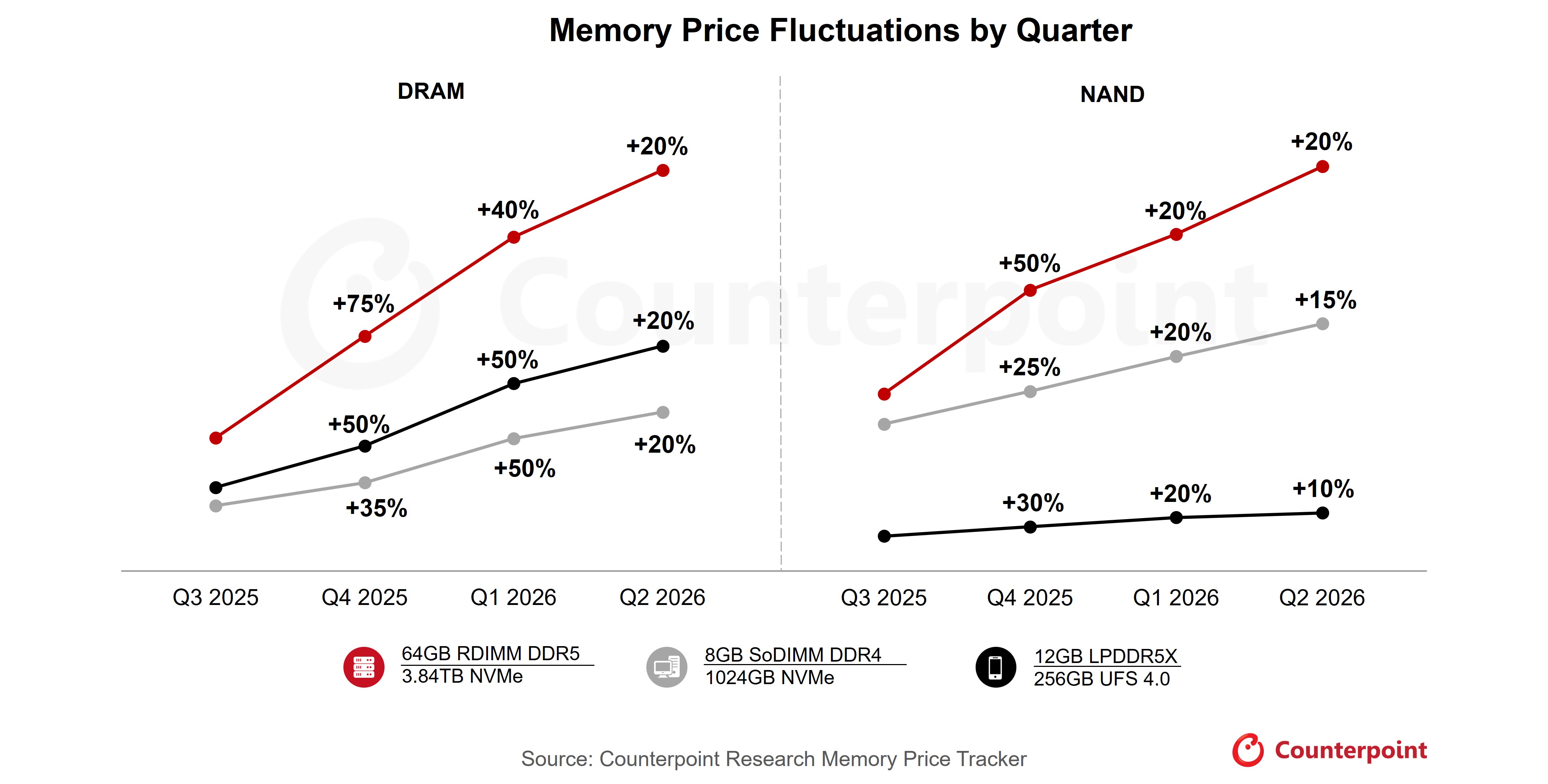

The compute squeeze

When OpenClaw dropped, Mac Minis with 32GB+ RAM started selling out.

The math is simple: autonomous agents cost $50-200/month using cloud services. A Mac Mini running local AI is a $600 one-time cost. Over 6-12 months, local wins. And you control your data.

There's a darker side.

AI data centers are disproportionately built in marginalized communities, spiking electricity prices and depleting water supplies (3-5 million gallons per day for cooling) while delivering zero benefit to the people living there.

Arizona. Oregon. Virginia. Real places paying the price for cloud AI they don't even use.

Local AI changes the equation. Your data stays on your machine. Your compute costs hit your electricity bill, not someone else's community.

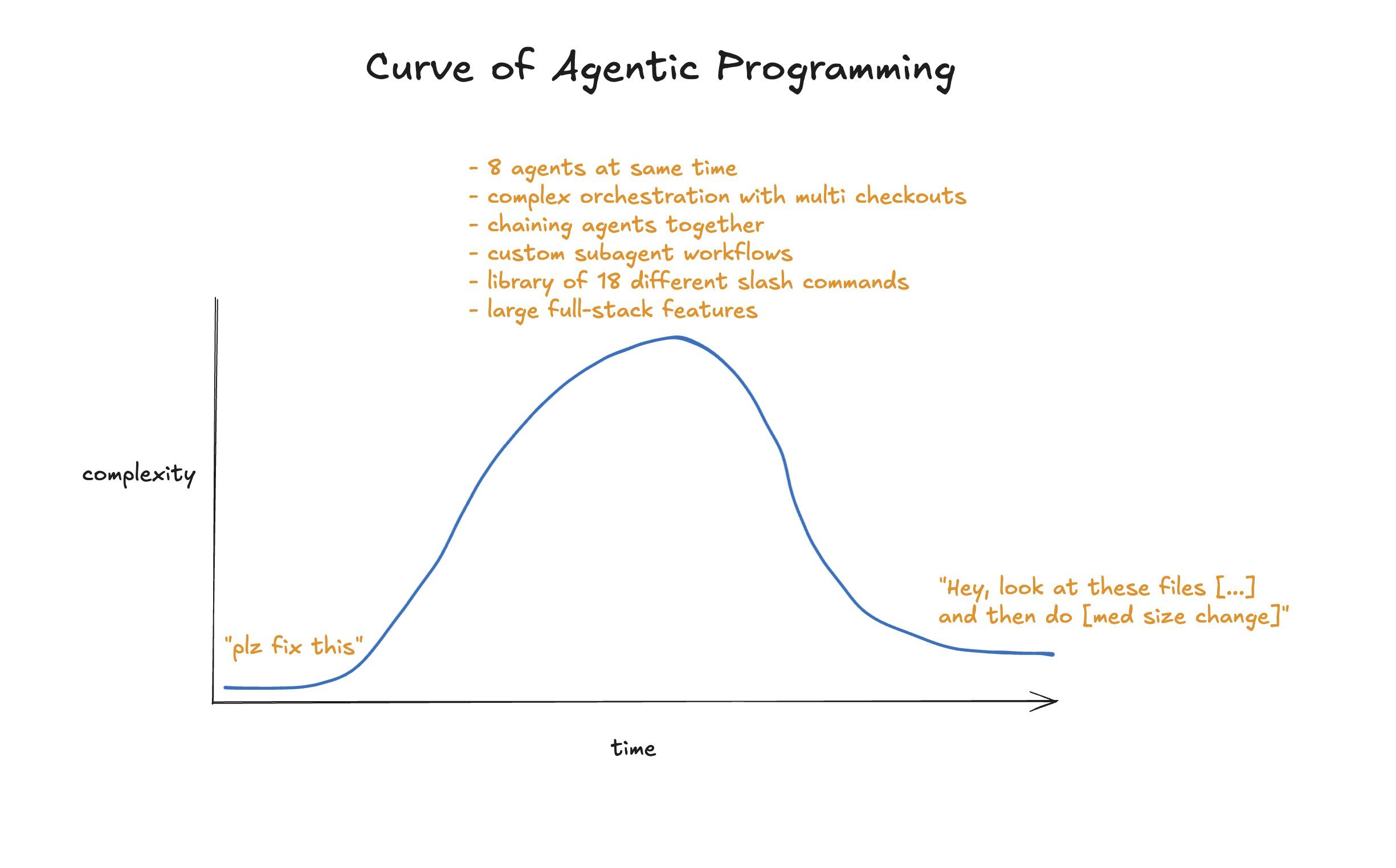

We're in the wild west of agentic AI. No security standards, no enterprise-grade solutions, just open-source experiments.

Think the early internet before HTTPS existed. OpenClaw is the proof of concept. The polished versions are coming.

What defense actually looks like

I build AI agent infrastructure. I run a platform where subscribers get persistent AI agents connected to real services. The security architecture took longer than the feature.

Most people connect an agent to their email and calendar on day one. No input scanning. No outbound restrictions. No rate limits. Full file system access.

That's not an assistant. That's an open door.

If you're running any AI agent with real-world access, here's the minimum:

- Least privilege. Your email agent doesn't need file system access. Your coding agent doesn't need to send emails. Separate the capabilities from the exposure.

- Scan before the agent reads. Build or install a filter that checks inbound content for injection patterns before your agent processes it. Flagged content never reaches the agent.

- Outbound allowlist. Before your agent sends anything, check the recipient against an approved list. Not on the list? Stop and ask the human. Never send to addresses found in the content itself. That's the exfiltration channel.

- Rate limits on everything. Cap emails, messages, and file modifications per hour. Even if an injection succeeds, rate limits contain the blast radius. Most injection attacks involve bulk actions. Limits stop that dead.

- Log and review. Every tool call, every message sent, every file accessed. Write logs to a place your agent can't modify. Review weekly.

None of this makes agents bulletproof. But it's the difference between a controlled system and a liability.

The people who treat AI agents like a chatbot they can just turn on are the ones who will get burned.

The paradox nobody's talking about

The features that make it useful (real access to your accounts, autonomous decision-making, the ability to act without asking permission) are the exact same features that make it a liability.

That's the whole story.

And it puts every major AI company in an awkward position. They've spent billions building the smartest models on the planet. But a random open-source project with a lobster mascot is the one that showed people what AI can actually do for them.

Intelligence without autonomy is a party trick. Autonomy is where the value lives.

What comes next

OpenClaw is version 0.1 of something much bigger. But here's what I think most people are missing.

The real race isn't to build smarter models. It's to own the agentic layer.

The place where AI connects to your email, your calendar, your files, your money. The place where it stops answering questions and starts doing work.

Every frontier model company sees this coming. OpenAI, Anthropic, Google. They will all pivot to capture this layer. They have to.

Because if they don't, they become commodities. They go from being Google to being AT&T: critical infrastructure that nobody thinks about, while someone else builds the product people actually touch.

OpenClaw proved the demand is real. Eighty thousand stars in a week is not curiosity. That's hunger.

People want AI that operates, not AI that talks.

The question is who owns that layer when the enterprise versions arrive. And what happens when the first major breach forces everyone to take this seriously.

Agentic & distributed systems, DeFi, and the compute economics. One email a week, no fluff.

Subscribe to the newsletter →About the author

Keenan Benning is the founder of cypher.camp, a platform that deploys AI agent teams for solo founders and small businesses. One person. Team-scale output. 60 seconds to deploy.

Other projects